I hope the title hasn’t misled you. I’m afraid this post is about probability, not Pamela Anderson.

It won’t be too technical, and will draw on a really important real-life application. Take the example of routine screening for breast cancer, where all women of a certain age are given a mammogram to test for the disease. Imagine you were given the following information:

The probability that one of these women has breast cancer is 0.8%. If a woman has breast cancer, the probability is 90% that she will have a positive mammogram. If a woman does not have breast cancer, the probability is 7% that she will still have a positive mammogram. Imagine a woman who has a positive mammogram. What is the probability that she actually has cancer?

Don’t worry if this leaves you hopelessly confused – so was I when I first encountered this problem. I guessed an answer of around 90% – after all it says if you have cancer there is a 90% chance you’ll get a positive mammogram. But that’s the probability of you having a positive test given that you have cancer. The question asks, what is the probability of you having cancer given that you have a positive test. It turns out there’s a huge difference between the two.

The difference is that sometimes you can have cancer and still get a negative test (this is a false negative). If 90% of those with cancer get a positive test, that still leaves 10% of those with cancer wrongly getting the all clear. You can also not have cancer and still get a positive test (this is a false positive). As the problem states, 7% of those without cancer will still test positive. No test is 100% accurate: human error can creep in, samples get mixed up, the technology malfunctions, and so on.

One way of making the problem a little easier to solve is to eschew the probabilities and percentages, which people find hard to understand, and just count the number of times things happen. Using frequencies, we can translate the problem above as follows:

8 out of every 1,000 women have breast cancer. Of these 8 women with breast cancer, 7 will have a positive mammogram. Of the remaining 992 women who don’t have breast cancer, some 70 will still have a positive mammogram. Imagine a sample of women who have positive mammograms in screening. How many of these women actually have breast cancer?

This is exactly the same problem as before, but we say 8 out of 1,000 rather than 0.8%, even though they mean the same thing. Put this way, the problem becomes easier to solve. Counting those who get a positive mammogram, we see there are 7 + 70 = 77. But only 7 of these have cancer. So the chance of you having cancer if you get a positive mammogram is 7/77, or about 9%.
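For anyone who wants to check the arithmetic, here is a minimal Python sketch of the calculation done both ways: with the rounded head counts and with the original percentages. The function and variable names are my own, chosen purely for illustration.

```python
def chance_of_cancer_given_positive(prevalence, sensitivity, false_positive_rate):
    """Share of positive tests that belong to people who really have the disease."""
    true_positives = prevalence * sensitivity
    false_positives = (1 - prevalence) * false_positive_rate
    return true_positives / (true_positives + false_positives)


# Head-count version, using the rounded numbers out of 1,000 women.
with_cancer_and_positive = 7
without_cancer_and_positive = 70
by_counting = with_cancer_and_positive / (
    with_cancer_and_positive + without_cancer_and_positive
)

# Percentage version, using the original figures: 0.8%, 90% and 7%.
by_probability = chance_of_cancer_given_positive(0.008, 0.90, 0.07)

print(f"By counting:    {by_counting:.1%}")
print(f"By probability: {by_probability:.1%}")
```

The two answers come out at roughly 9.1% and 9.4%. The small gap is only there because the head counts were rounded to whole women.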

This is quite small. Why? Well, even though 90% of those with cancer got a positive test, there was only a small number with cancer to begin with (8 in fact). A large percentage of a small number still gives you a small number. Meanwhile only 7% of those without cancer got a positive test. But there were a large number of women without cancer (992). A small percentage of a large number gives you a large number. It was this large number that swamped the small number – the number of false positives outnumbered the true positives, and so only a small proportion of those with positive tests actually have the disease.
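To see how much the rarity of the disease drives this result, here is a rough Python sketch that keeps the test exactly as described (90% and 7%) and varies only how common the disease is. The 0.8% figure is the one from the example; the other prevalence values are made up for comparison, and the function name is my own.

```python
def chance_of_disease_given_positive(prevalence, sensitivity=0.90, false_positive_rate=0.07):
    """Share of positive tests that are true positives, for a given prevalence."""
    true_positives = prevalence * sensitivity
    false_positives = (1 - prevalence) * false_positive_rate
    return true_positives / (true_positives + false_positives)


# 0.8% comes from the example; the other prevalences are hypothetical.
prevalences = (0.001, 0.008, 0.05, 0.20, 0.50)
chances = [chance_of_disease_given_positive(p) for p in prevalences]

for prevalence, chance in zip(prevalences, chances):
    print(f"prevalence {prevalence:6.1%} -> chance of disease after a positive test {chance:6.1%}")
```

The rarer the disease, the more the false positives swamp the true positives, even though the test itself never changes.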

So this is immensely important. If ever you get a positive test for a disease, remember it doesn’t necessarily follow that you have the disease. Depending on how rare the disease is and how often the test gives false positives, you might have a very small chance of actually having the disease you’ve tested positive for. Many people’s worlds have fallen apart because they have been told they test positive for a disease, be it breast cancer or HIV. But they, or their doctors, never stop to think that many people can still get a positive test even though they don’t have the disease. In our example above, most of the women with positive tests didn’t have the disease (70 without cancer versus 7 with). If this were explained to them, they could realise that a positive test is not conclusive. They could find out a bit about the probabilities that they actually have the disease or that the test has misled them, and maybe ask for more tests.

Either way, you know that a positive test shows you have a chance of having the disease; it’s not a death sentence. You’d feel a lot better knowing that a positive mammogram meant there was a 9% chance of having breast cancer, not a 90% one.

Unfortunately, research has been done on how many doctors understand this reasoning, and scandalously few do. They studied medicine, not statistics, after all. If ever you get a positive test, press your doctor on these points to ensure you are getting the right information.

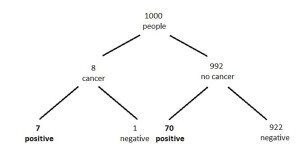

A picture tells a thousand words: Sometimes pictures help work these problems out. One can draw a tree diagram:

This shows more clearly the 77 with positive tests, of whom only 7 have cancer.

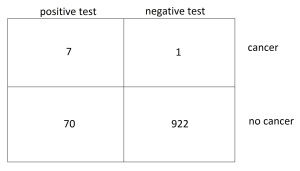

You can also ‘think inside the box’:

A little bit more: I realise I haven’t yet explained the title. The type of probabilistic thinking used in this post is called Bayesian probability, named after Thomas Bayes, a Presbyterian minister who did work in mathematics. I won’t go into too much detail (I don’t understand a lot about it myself), but it gives a way of telling us how we should update our beliefs given the evidence we find.

In the above breast cancer screening example, we wanted to find how much the evidence (the positive mammogram) should lead us to believe we had cancer. The same approach can be applied to any of our beliefs and the evidence we hold for them.
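As a rough illustration of what “updating” means, here is a small Python sketch of Bayes’ rule written as a function from an old belief to a new one. It is applied once for the positive mammogram in the example, and then a second time for a hypothetical second positive test. That second step assumes the second test is independent of the first and has the same 90% and 7% rates, which is my simplification and not something the example states. All names in the code are my own.

```python
def update_belief(prior, p_evidence_if_true, p_evidence_if_false):
    """Bayes' rule: how likely the belief is once the evidence has been seen."""
    numerator = prior * p_evidence_if_true
    return numerator / (numerator + (1 - prior) * p_evidence_if_false)


# Belief before any test: 0.8% of women screened have breast cancer.
before_testing = 0.008

# Evidence: a positive mammogram (90% if cancer, 7% if no cancer).
after_first_positive = update_belief(before_testing, 0.90, 0.07)

# Assumption (not from the example): a second, independent test with the
# same error rates also comes back positive.
after_second_positive = update_belief(after_first_positive, 0.90, 0.07)

print(f"Before testing:        {before_testing:.1%}")
print(f"After one positive:    {after_first_positive:.1%}")
print(f"After a second positive: {after_second_positive:.1%}")
```

Under those assumptions the belief moves from under 1% to about 9% and then to somewhere near 57%. Each piece of evidence shifts the belief, and yesterday’s conclusion becomes today’s starting point.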

The example I’ve used comes from an excellent book, Gerd Gigerenzer’s Reckoning with Risk.